GPT-4.5: "Not a frontier model"?

Estimates place GPT-4.5 at about an order of magnitude more training compute than GPT-4. These estimates are not based on any released numbers. Still, a combination of more parameters and a bigger dataset (5X parameters × 2X dataset size = 10X compute) would put the model in the ballpark of 5-7T total parameters. With a sparsity factor similar to GPT-4's, that would mean roughly 600B active parameters.
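The back-of-the-envelope math above can be sketched in a few lines. This is a minimal sketch that assumes the common approximation that training FLOPs scale as C ≈ 6·N·D (N = parameters, D = training tokens). For a mixture-of-experts model, N should be the *active* parameter count, so the 5X multiplier carries over only if the sparsity stays the same. The `ACTIVE_FRACTION` of ~1/10 is a hypothetical value backed out from the post's own figures (~600B active out of ~6T total). It is not a published GPT-4 or GPT-4.5 number.

```python
def relative_compute(param_multiplier: float, data_multiplier: float) -> float:
    """Ratio of training compute between two models under C ~ 6 * N * D.

    The constant 6 cancels, so the ratio is just the product of the
    parameter ratio and the dataset (token) ratio.
    """
    return param_multiplier * data_multiplier


def active_params(total_params: float, active_fraction: float) -> float:
    """Active parameters per token for a sparse (MoE) model."""
    return total_params * active_fraction


# Hypothetical: active fraction implied by ~600B active / ~6T total in the text.
ACTIVE_FRACTION = 0.1

if __name__ == "__main__":
    print(f"compute ratio vs GPT-4: {relative_compute(5, 2):.0f}x")
    for total in (5e12, 6e12, 7e12):
        active = active_params(total, ACTIVE_FRACTION)
        print(f"{total / 1e12:.0f}T total -> ~{active / 1e9:.0f}B active")
```

Note that the 10X figure comes from *multiplying* the two factors, not adding them. Doubling the data at a fixed model size doubles compute, and so does doubling the model at a fixed dataset.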

...

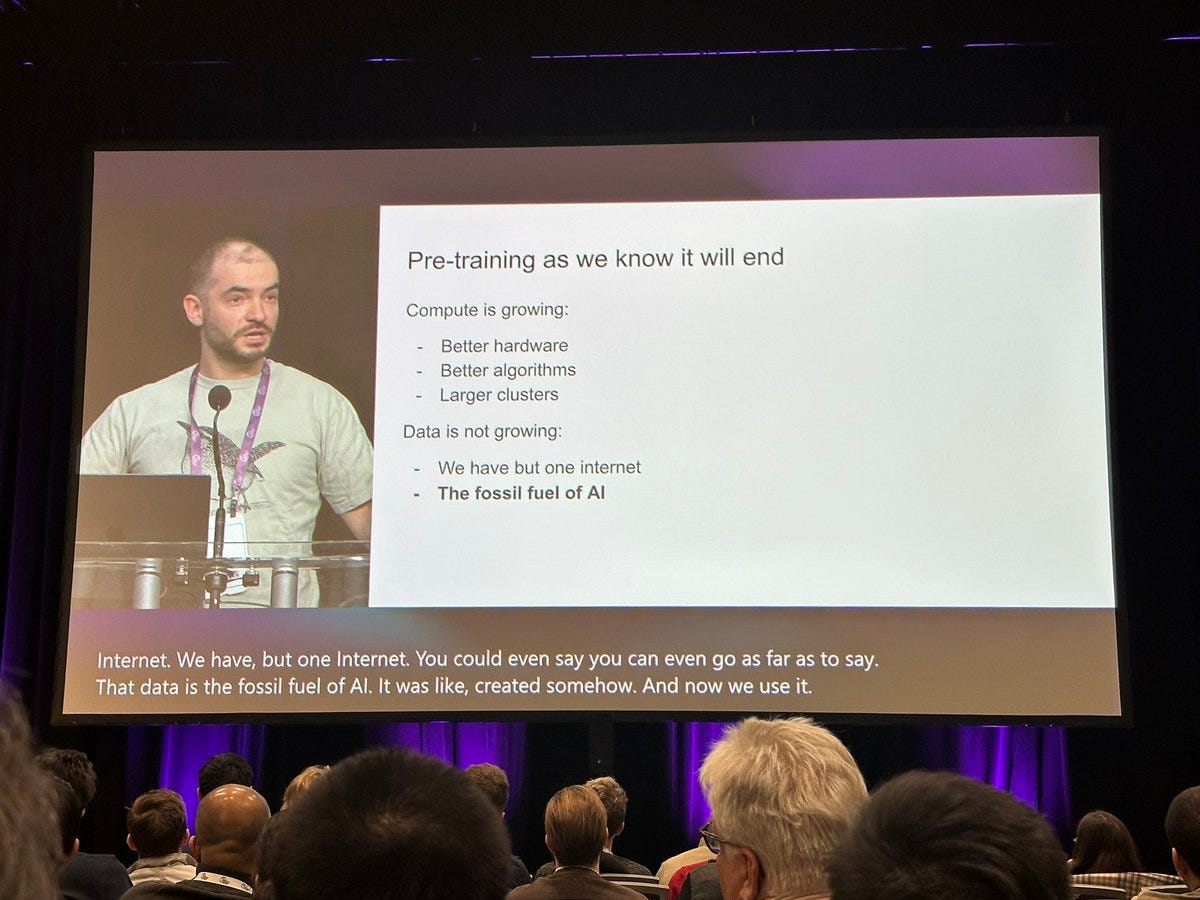

Scaling language models is not dead. Still, reflecting on why this release felt so weird is crucial to staying sane in the arc of AI’s progress. We’ve entered the era where trade-offs among different types of scaling are real.

- OpenAI may have released 4.5 because scaling the price down is getting more difficult

- DeepSeek forced OpenAI to offer o3-mini at a ridiculously low price

- Releasing the next 4o or o3 model at the same price with only minor improvements would look like exactly that: a sign that prices are no longer coming down

- However, if 4.5 is offered at $150 per million output tokens and a 4.5o comes out in a few months at $1.50, a 100X price drop, that would get people talking